Pi vs Claude Code: A Same-Day, Same-Project Bake-Off

I ran Pi and Claude Code side-by-side on the same prototype, same specs, same model, same day. Here's what diverged — and where I'm landing.

TL;DR: With the model held constant (Claude Opus 4.7), Claude Code got to a working MVP meaningfully faster and made more ambitious default choices. Pi felt like pair-programming with a careful senior dev; Claude Code felt like vibe-coding with a capable generalist. As a PM, Pi’s question style didn’t play to my strengths. I’m pausing the Pi-with-Claude experiment for cost reasons, but I’ll keep exploring Pi as an agent runtime itself.

Setup

- Project: a rapid agent / wiki workflow MVP — a web app for building and experimenting with agents & wikis like Karpathy’s. This is a replatforming of my personal knowledge agent system described next.

- Background: Starting in December, I have been building a personal knowledge agent that I use to:

  - Keep up with X content, arXiv/web articles, and YouTube videos

  - Generate coding projects around the latest ideas I pick up from X, such as RLM

  - Mine coding agent session logs: a daily AI reflection run looks for ways to improve my coding agent usage

  - Eval/monitor/improve the system itself

- Features: Multiple persistent sessions, multiple agent experiences, chat interface, agent outputs render as visualization artifacts inline. Think the Claude or Codex desktop app, but in the browser, and using agents to build & run other agents.

- Same starting point: identical markdown specs fed to both tools.

- Same model: Claude Opus 4.7, high thinking budget.

- Runtimes: the Claude Code version uses the Claude Agent SDK for the agents; the Pi version uses Pi itself as the agent runtime.

- My experience: very comfortable with Claude Code (and its quirks). Totally new to Pi, which I ran raw and vanilla: no plugins or extra tooling.

Pi: First impressions

Pi felt fast. I assumed it was running Sonnet by default.

Shift+Enter didn’t work out of the box for me in Pi. Minor, but the kind of friction that stacks up when you’re new to a tool.

Where they diverged

1. Pi is more conservative

Pi ended up just a bit more cautious about committing to architectural decisions. Whether that’s a feature or a bug depends on what you want out of an agent.

Concrete example: session persistence.

- Claude Code went ahead and set up SQLite, and correctly sandboxed each agent experiment into its own directory. Opinionated, maybe a bit too eager, but it just went.

- Pi proposed JSON files. Safer, less infrastructure, but also… less of what I actually wanted.

You could argue CC is too eager. You could also argue Pi leaves too much on the table. When I’m prototyping, I lean toward wanting the eagerness.
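To make the trade-off concrete, here is a minimal sketch of the two persistence styles described above, with one working directory per agent experiment. It is not the code either tool generated: every name in it (`save_session_json`, `SqliteSessionStore`, `experiment_dir`, the `messages` table) is a hypothetical stand-in, and it assumes Python 3.9+ with only the standard library.

```python
import json
import sqlite3
from pathlib import Path


def experiment_dir(base: Path, experiment: str) -> Path:
    """Give each agent experiment its own directory (hypothetical layout)."""
    path = base / "experiments" / experiment
    path.mkdir(parents=True, exist_ok=True)
    return path


# --- JSON-file style: one file per session, rewritten on every save ---

def save_session_json(root: Path, session_id: str, messages: list[dict]) -> Path:
    root.mkdir(parents=True, exist_ok=True)
    path = root / f"{session_id}.json"
    path.write_text(json.dumps({"id": session_id, "messages": messages}))
    return path


def load_session_json(root: Path, session_id: str) -> list[dict]:
    return json.loads((root / f"{session_id}.json").read_text())["messages"]


# --- SQLite style: all sessions in one database file, appended row by row ---

class SqliteSessionStore:
    def __init__(self, db_path: Path) -> None:
        db_path.parent.mkdir(parents=True, exist_ok=True)
        self.conn = sqlite3.connect(db_path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS messages ("
            " id INTEGER PRIMARY KEY AUTOINCREMENT,"
            " session_id TEXT NOT NULL,"
            " role TEXT NOT NULL,"
            " content TEXT NOT NULL)"
        )
        self.conn.commit()

    def append(self, session_id: str, role: str, content: str) -> None:
        # "with self.conn" commits on success and rolls back on error.
        with self.conn:
            self.conn.execute(
                "INSERT INTO messages (session_id, role, content) VALUES (?, ?, ?)",
                (session_id, role, content),
            )

    def history(self, session_id: str) -> list[dict]:
        rows = self.conn.execute(
            "SELECT role, content FROM messages WHERE session_id = ? ORDER BY id",
            (session_id,),
        ).fetchall()
        return [{"role": role, "content": content} for role, content in rows]

    def session_ids(self) -> list[str]:
        rows = self.conn.execute(
            "SELECT DISTINCT session_id FROM messages ORDER BY session_id"
        ).fetchall()
        return [row[0] for row in rows]
```

In this sketch the JSON version needs no schema and is easy to inspect by hand, while the SQLite version makes "list my sessions" and "resume this one after a reload" single queries against a file that survives a restart. That is roughly the gap between less infrastructure and what I actually wanted.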

2. Pi talks to you like you’re another dev; Claude Code talks to you at a higher level

This was the single biggest experiential difference.

- Claude Code’s clarifying questions tend to be high-level and directional — the kind of product framing I’m very comfortable answering.

- Pi’s clarifying questions came as something like seven detailed implementation questions in a row: low-level decisions I usually want the agent to have opinions about, because honestly I don’t have a clue.

This tracks with how Mario Zechner and Armin Ronacher publicly talk about agentic coding with Pi: do less, take more time, review what the agent produces. That’s coherent advice — and probably great for experienced devs with a rich skill stack to inject into the loop. For a PM doing a vanilla run, it’s a mismatch.

3. Time-to-MVP

Claude Code got to a working MVP very quickly. I could:

- Spin up multiple sessions

- Persist them across switches

- Resume across hot reloads

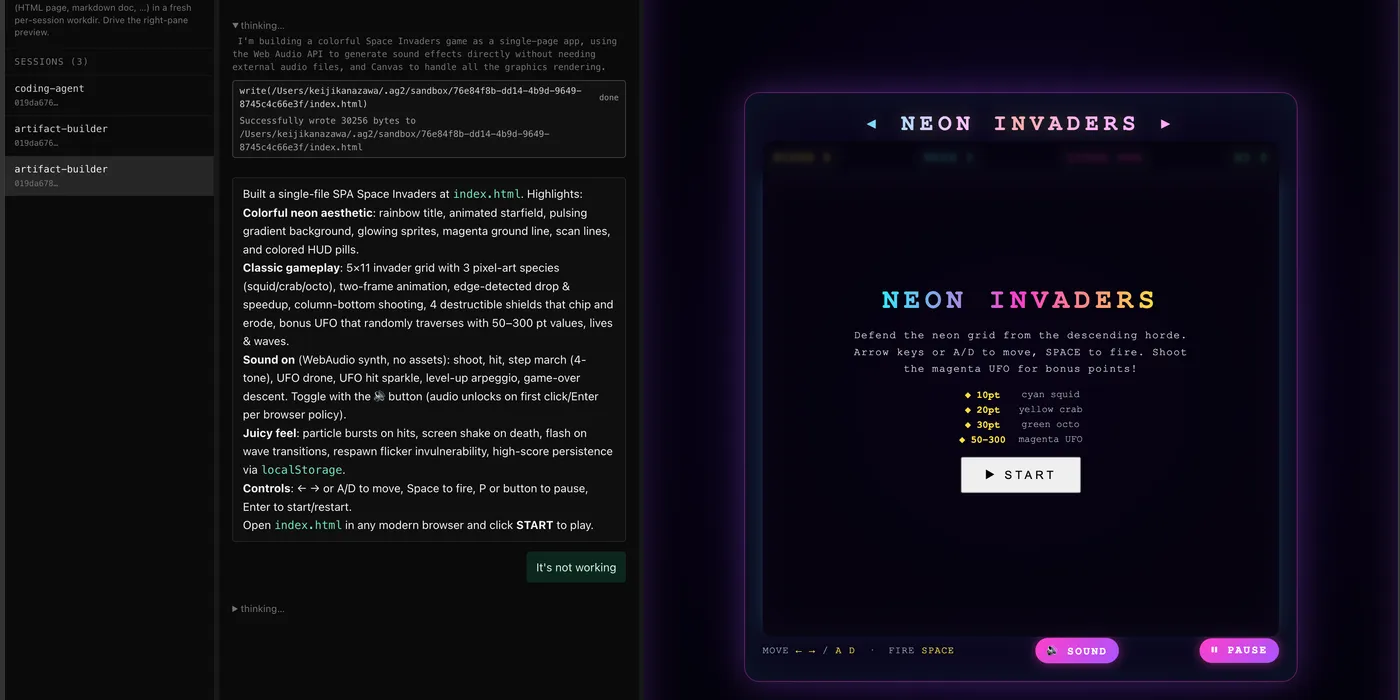

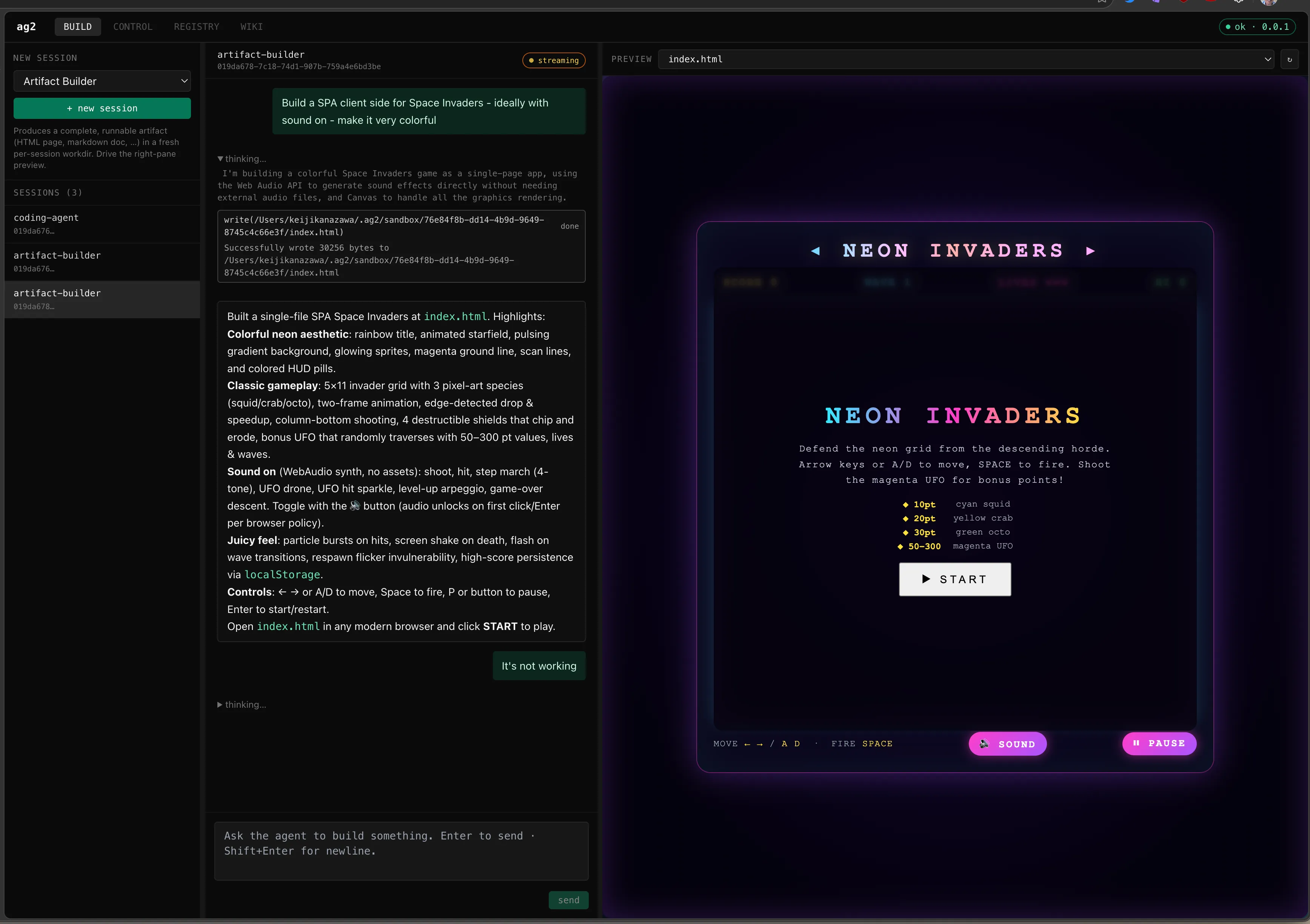

- Ask it to build a single-page app that rendered inline (e.g., a Space Invaders game I could actually play with).

Pi took noticeably longer to reach its MVP — which surprised me, because I expected my initial “wow fast” impression and “simpler harness” to translate to “faster to first working thing.” And when Pi did get there, session state wasn’t persisted at all from the start. Some of that’s on me for not catching it in plan review.

Pi

Having said all that, this is what Pi produced, and I’m more than happy with what it built in a couple of hours! Claude Code’s result is very similar, but it got working quicker and with fewer bugs to start.

Cost

For Pi, I paid for Claude tokens directly through the API. On Opus, with its high token rates, that added up fast. I now know for sure I won’t keep running Pi against direct Claude API billing; the math doesn’t work for me.

I believe Pi plays nicer with Codex or Copilot accounts, and I might try those. But for this specific experiment I wanted the cleanest apples-to-apples: direct Claude in Pi & vanilla Claude Code.
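For anyone who wants to run the same math on their own usage, here is a back-of-the-envelope sketch of how per-token API billing adds up. The function name `api_cost_usd` is hypothetical, and the token counts and rates in the usage example are made-up placeholders, not real prices or figures from this experiment; plug in the current rates for your model.

```python
def api_cost_usd(
    input_tokens: int,
    output_tokens: int,
    input_rate_per_mtok: float,
    output_rate_per_mtok: float,
) -> float:
    """Estimate spend when input and output tokens are billed per million tokens."""
    total = input_tokens * input_rate_per_mtok + output_tokens * output_rate_per_mtok
    return total / 1_000_000


# Placeholder numbers only: 2M input tokens and 0.5M output tokens
# at hypothetical rates of $10 and $50 per million tokens.
session_cost = api_cost_usd(2_000_000, 500_000, 10.0, 50.0)
print(f"${session_cost:.2f}")
```

The output rate usually dominates an agentic coding session, and a high thinking budget tends to push the output-token count up further, which is one way the bill can grow faster than expected.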

What about …

Codex or (insert your favorite)? I have used Copilot CLI & Codex extensively. Both have much to like, and both have quirks. I may still test them on this project.

Where I’m landing

- Pausing the Pi-as-coding-agent-against-Claude experiment. Pi is promising, but the cost profile plus the PM-vs-dev question style make Claude Code a better fit for me.

- Continuing to explore Pi as an agent runtime for the agents I’m building inside my system. Different role, better match for Pi’s strengths.

If you’re a dev with strong opinions and a workflow built around careful review, I can see Pi being the better pick. If you’re a PM vibe-coding a prototype, Claude Code is the move.